Melanie Weber

Assistant Professor

Harvard University

Biography

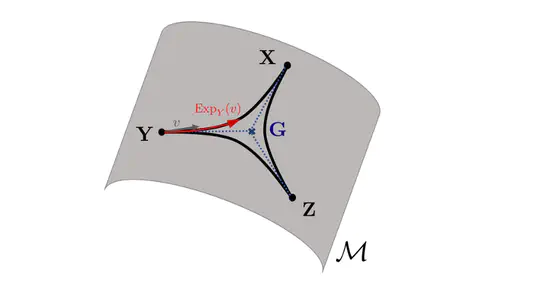

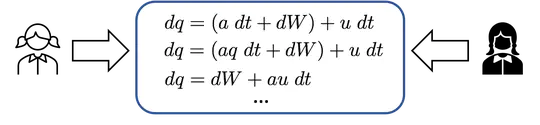

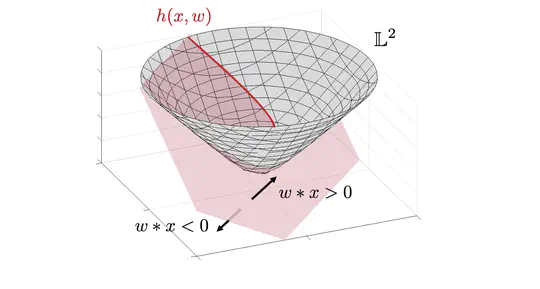

I am an Assistant Professor of Applied Mathematics and of Computer Science at Harvard, where I lead the Geometric Machine Learning Group. My research focuses on using geometric structure in data to design efficient Machine Learning and Optimization methods with provable guarantees. This AI Magazine article surveys Geometric Machine Learning, including my work within this area.

In 2021-2022, I was a Hooke Research Fellow at the Mathematical Institute in Oxford and a Nicolas Kurti Junior Research Fellow at Brasenose College. In Fall 2021, I was a Research Fellow at the Simons Institute in Berkeley, where I participated in the program Geometric Methods for Optimization and Sampling. Previously, I received my PhD from Princeton University (2021) under the supervision of Charles Fefferman, held visiting positions at MIT and the Max Planck Institute for Mathematics in the Sciences, and interned in the research labs of Facebook, Google, and Microsoft.

My research is supported by the National Science Foundation, the Sloan Foundation, the Aramont Foundation, the Harvard Dean’s Fund, and the Harvard Data Science Initiative.

- Data Geometry

- Graph Machine Learning

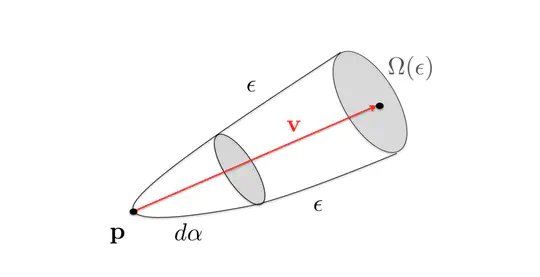

- Optimization on Manifolds

- Machine Learning in Non-Euclidean spaces

- Label- and Resource-efficient Learning

- PhD in Applied Mathematics, 2021, Princeton University

- BSc/MSc in Mathematics and Physics, 2016, University of Leipzig

Contact

- mweber@seas.harvard.edu

- Office hours by appointment. If you are a Harvard undergraduate or graduate student interested in working with me, please send me an email. Due to high email volume, I am unable to reply to most emails from non-Harvard students regarding admission, supervised projects, or internships.